Scientific articles aren’t published until they’ve undergone peer review. When a manuscript is submitted to a scientific journal, the editor asks several experts to read it and provide their recommendation. All my papers were reviewed and most were accepted and published, although usually after a revision. Today, I’ll tell you about one of my manuscripts that did not survive peer review. I’m glad it didn’t.

In the early 1990s, I was browsing in the library at the National Institutes of Health—where I worked—and stumbled upon an article by Egbert Dekker about the dip in the anodal strength-interval curve.

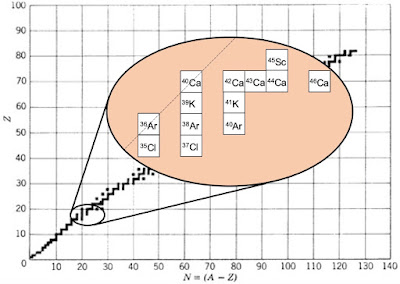

Dekker, E. (1970) “Direct Current Make and Break Thresholds for Pacemaker Electrodes on the Canine Ventricle,” Circulation Research, Volume 27, Pages 811–823.

In his experiment, Dekker stimulated a dog heart twice: first (S1) to excite an action potential, and then again (S2) during or after the refractory period. For a short interval between S1 and S2 you expect the tissue to still be refractory, or unexcitable, so S2 produces no response. Wait a little longer and the tissue is partially refractory; you'll excite a second action potential if S2 is strong enough. Wait longer still and the tissue will have returned to rest; a weak S2 will excite it. So a plot of S2 threshold strength versus S1-S2 interval (the strength-interval curve) ought to decrease as the interval lengthens.
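The S1-S2 protocol lends itself to a short numerical sketch. The Python below is a hypothetical illustration, not Dekker's experiment and not the bidomain calculations described later. It assumes a single space-clamped Hodgkin-Huxley patch with standard squid-axon parameters as a stand-in excitable membrane, 1 ms rectangular pulses, and a 0 mV spike criterion; the helper names `run`, `s2_excites`, and `s2_threshold` are illustrative. A suprathreshold S1 fires at t = 0, and for each S1-S2 interval the code bisects for the weakest depolarizing S2 that still produces an action potential. A lone patch has no electrode geometry, so it cannot tell anode from cathode and cannot show the anodal dip; it also uses the nerve model that the rest of this story is about.

```python
import math

# ASSUMPTION: standard Hodgkin-Huxley squid-axon parameters (rest near -65 mV),
# used only as a generic excitable membrane, not a cardiac model.
C_M = 1.0                              # membrane capacitance, uF/cm^2
G_NA, G_K, G_L = 120.0, 36.0, 0.3       # maximal conductances, mS/cm^2
E_NA, E_K, E_L = 50.0, -77.0, -54.387   # reversal potentials, mV


def vtrap(x, y):
    """x / (exp(x/y) - 1), replaced by its series limit when x is near zero."""
    if abs(x / y) < 1e-6:
        return y * (1.0 - x / (2.0 * y))
    return x / (math.exp(x / y) - 1.0)


def rates(v):
    """Alpha/beta rate constants (1/ms) for the m, h and n gates at potential v (mV)."""
    return (0.1 * vtrap(-(v + 40.0), 10.0), 4.0 * math.exp(-(v + 65.0) / 18.0),
            0.07 * math.exp(-(v + 65.0) / 20.0), 1.0 / (1.0 + math.exp(-(v + 35.0) / 10.0)),
            0.01 * vtrap(-(v + 55.0), 10.0), 0.125 * math.exp(-(v + 65.0) / 80.0))


def run(pulses, t_end, dt=0.01):
    """Forward-Euler simulation of one space-clamped HH patch (hypothetical helper).

    pulses: list of (start_ms, duration_ms, amplitude_uA_per_cm2) rectangular pulses.
    Returns (times, voltages) sampled every dt.
    """
    v = -65.0
    am, bm, ah, bh, an, bn = rates(v)
    m, h, n = am / (am + bm), ah / (ah + bh), an / (an + bn)  # resting steady state
    ts, vs = [], []
    for k in range(int(round(t_end / dt))):
        t = k * dt
        i_ext = sum(amp for (t0, dur, amp) in pulses if t0 <= t < t0 + dur)
        am, bm, ah, bh, an, bn = rates(v)
        i_ion = G_NA * m**3 * h * (v - E_NA) + G_K * n**4 * (v - E_K) + G_L * (v - E_L)
        v += dt * (i_ext - i_ion) / C_M
        m += dt * (am * (1.0 - m) - bm * m)
        h += dt * (ah * (1.0 - h) - bh * h)
        n += dt * (an * (1.0 - n) - bn * n)
        ts.append(t + dt)
        vs.append(v)
    return ts, vs


S1 = (0.0, 1.0, 40.0)    # suprathreshold conditioning pulse: start, duration (ms), amplitude
S2_DURATION = 1.0        # ms; S2 is depolarizing (loosely analogous to cathodal stimulation)


def s2_excites(interval, amplitude):
    """True if S2, delivered `interval` ms after S1, is followed by V > 0 mV after it ends."""
    ts, vs = run([S1, (interval, S2_DURATION, amplitude)], t_end=interval + 20.0)
    return max(v for t, v in zip(ts, vs) if t >= interval + S2_DURATION) > 0.0


def s2_threshold(interval, hi=100.0, tol=0.5):
    """Bisect for the weakest exciting S2 amplitude; math.inf if even `hi` fails."""
    if not s2_excites(interval, hi):
        return math.inf
    lo = 0.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if s2_excites(interval, mid):
            hi = mid
        else:
            lo = mid
    return hi


if __name__ == "__main__":
    for interval in (8.0, 10.0, 15.0, 20.0, 30.0, 40.0):
        print(f"S1-S2 = {interval:4.0f} ms   S2 threshold = {s2_threshold(interval):6.1f} uA/cm^2")
```

Under these assumptions the printed thresholds should broadly fall as the interval lengthens, which is the "expected" curve. Getting the anodal dip requires a spatial model with distinct anode and cathode behavior, such as the bidomain model.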

Dekker observed that the strength-interval curve behaved as expected when S2 was provided by a cathode (an electrode having a negative voltage). A positive anode, however, produced a strength-interval curve containing a dip. In other words, there was an oddball section of the anodal curve that increased with the interval.

[Figure: The cathodal and anodal strength-interval curves.]

Moreover, Dekker observed two types of excitation: make and break. Make occurred after a stimulus pulse began, and break after it ended.

Both anodal and cathodal stimuli could cause make and break excitation. (For more about make and break, see my previous post.)

I decided to examine make and break excitation and the dip in the anodal strength-interval curve using a computer simulation. The bidomain model (see Section 7.9 in Intermediate Physics for Medicine and Biology) represented the anisotropic electrical properties of cardiac tissue. The introduction of the resulting paper stated:

In this study, my primary goal is to present a hypothesis for the mechanism of the dip in the anodal strength-interval curve: The dip arises from a complex interaction between anode-break and anode-make excitation. This hypothesis is explored in detail and supported by numerical calculations using the bidomain model. The same mechanism may explain the no-response phenomenon. I also consider the induction of periodic responses [a cardiac arrhythmia] from a premature anodal stimulus. The bidomain model was used previously to investigate the cathodal strength-interval curve; in this study, these calculations are extended to investigate anodal stimulation.

When I submitted this manuscript to a journal, it was rejected! Why? It contained a fatal flaw. To represent how the membrane ion channels opened and closed, I had used the Hodgkin and Huxley model, appropriate for a nerve axon. Yet, the nerve and cardiac action potentials are different. For example, the action potential in the heart lasts a hundred times longer than in a nerve.

After swearing and pouting, I calmed down and redid the calculation using an ion channel model more appropriate for cardiac tissue, and then published a series of papers that are among my best.

Roth, B. J. (1995) “A Mathematical Model of Make and Break Electrical Stimulation of Cardiac Tissue by a Unipolar Anode or Cathode,” IEEE Transactions on Biomedical Engineering, Volume 42, Pages 1174–1184.

Roth, B. J. (1996) “Strength-Interval Curves for Cardiac Tissue Predicted Using the Bidomain Model,” Journal of Cardiovascular Electrophysiology, Volume 7, Pages 722–737.

Roth, B. J. (1997) “Nonsustained Reentry Following Successive Stimulation of Cardiac Tissue Through a Unipolar Electrode,” Journal of Cardiovascular Electrophysiology, Volume 8, Pages 768–778.

I kept a copy of the rejected paper (you can download it here). It's interesting for what it got right, and what it got wrong.

What it got right: The paper identified make and break regions of the strength-interval curve, predicted a dip in the anodal curve but not the cathodal curve, and produced reentry for strong stimuli near the make/break transition. It even reproduced the no-response phenomenon, in which a strong stimulus excites an action potential but an even stronger stimulus does not.

What it got wrong: Cathode-break excitation was missing. The mechanism for anode-break excitation was incorrect. The Hodgkin-Huxley model predicts that anode-break excitation arises from the ion channel kinetics (for the cognoscenti, hyperpolarization removes sodium channel inactivation). This type of anode-break excitation doesn’t happen in the heart but did occur in my simulations, leading me astray. This wrong anode-break mechanism led to wrong explanations for the dip in the anodal strength-interval curve and the no-response phenomenon. (For the correct mechanism, look here.)
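The Hodgkin-Huxley anode-break mechanism mentioned above can be seen in a single space-clamped membrane patch. The Python below is a minimal sketch, not code from the rejected manuscript. It assumes the standard squid-axon Hodgkin-Huxley parameters (resting potential near -65 mV), a forward-Euler integrator, and a hypothetical helper `run`. A hyperpolarizing current of -10 µA/cm² applied for 20 ms stands in for anodal stimulation. The code records the sodium inactivation gate h, so you can watch hyperpolarization remove inactivation, and it treats an action potential (V above 0 mV) that appears only after the pulse ends as break excitation.

```python
import math

# ASSUMPTION: standard Hodgkin-Huxley squid-axon parameters (rest near -65 mV).
C_M = 1.0                              # membrane capacitance, uF/cm^2
G_NA, G_K, G_L = 120.0, 36.0, 0.3       # maximal conductances, mS/cm^2
E_NA, E_K, E_L = 50.0, -77.0, -54.387   # reversal potentials, mV


def vtrap(x, y):
    """x / (exp(x/y) - 1), replaced by its series limit when x is near zero."""
    if abs(x / y) < 1e-6:
        return y * (1.0 - x / (2.0 * y))
    return x / (math.exp(x / y) - 1.0)


def rates(v):
    """Alpha/beta rate constants (1/ms) for the m, h and n gates at potential v (mV)."""
    return (0.1 * vtrap(-(v + 40.0), 10.0), 4.0 * math.exp(-(v + 65.0) / 18.0),
            0.07 * math.exp(-(v + 65.0) / 20.0), 1.0 / (1.0 + math.exp(-(v + 35.0) / 10.0)),
            0.01 * vtrap(-(v + 55.0), 10.0), 0.125 * math.exp(-(v + 65.0) / 80.0))


def run(i_stim, t_start, duration, t_end, dt=0.01):
    """Forward-Euler HH patch with one rectangular pulse i_stim (uA/cm^2).

    Hypothetical helper. Returns (times, voltages, h_values) sampled every dt.
    """
    v = -65.0
    am, bm, ah, bh, an, bn = rates(v)
    m, h, n = am / (am + bm), ah / (ah + bh), an / (an + bn)  # resting steady state
    ts, vs, hs = [], [], []
    for k in range(int(round(t_end / dt))):
        t = k * dt
        i_ext = i_stim if t_start <= t < t_start + duration else 0.0
        am, bm, ah, bh, an, bn = rates(v)
        i_ion = G_NA * m**3 * h * (v - E_NA) + G_K * n**4 * (v - E_K) + G_L * (v - E_L)
        v += dt * (i_ext - i_ion) / C_M
        m += dt * (am * (1.0 - m) - bm * m)
        h += dt * (ah * (1.0 - h) - bh * h)
        n += dt * (an * (1.0 - n) - bn * n)
        ts.append(t + dt)
        vs.append(v)
        hs.append(h)
    return ts, vs, hs


# Hyperpolarizing ("anodal") pulse: -10 uA/cm^2 from t = 5 ms to t = 25 ms.
T_START, DURATION = 5.0, 20.0
ts, vs, hs = run(-10.0, T_START, DURATION, t_end=50.0)
release = T_START + DURATION

_, _, ah0, bh0, _, _ = rates(-65.0)
h_rest = ah0 / (ah0 + bh0)                                # inactivation gate at rest
h_at_release = [h for t, h in zip(ts, hs) if t <= release][-1]
during = [v for t, v in zip(ts, vs) if T_START < t <= release]
t_peak, v_peak = max(((t, v) for t, v in zip(ts, vs) if t > release), key=lambda tv: tv[1])

print(f"most hyperpolarized V during pulse: {min(during):.1f} mV")
print(f"h at rest: {h_rest:.2f}   h at end of pulse: {h_at_release:.2f}")
print(f"peak V after release: {v_peak:.1f} mV at t = {t_peak:.1f} ms")
print("break excitation" if v_peak > 0.0 and max(during) < 0.0 else "no break excitation")
```

Running it should show h climbing well above its resting value during the pulse and a full action potential only after the pulse ends. That is anode-break excitation by the sodium-inactivation mechanism, which, as noted above, doesn't occur in cardiac tissue.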

Below I reproduce the final paragraph of the manuscript, with the parts that were wrong in red.

“What useful conclusions result from these simulations?” is a fair question, given the limitations of the model. I believe the primary contribution is a hypothetical mechanism for the dip in the anodal strength-interval curve. The dip may arise from a complex interaction of anode-break and anode-make stimulation: A nonpropagating active response at the virtual cathode raises the threshold for anode-break stimulation under the anode. The same interaction could explain the no-response phenomenon. A second contribution is a hypothesis for the mechanism generating periodic responses to strong anodal stimuli: Anode-make stimulation cannot propagate back toward the anode because of the strong hyperpolarization, and the subsequent excitation of the tissue under the anode occurs with sufficient delay that a reentrant loop arises. This hypothesis is related to, but not the same as, the one presented by Saypol and Roth for cathodally induced periodic responses. These mechanisms are suggested by my numerical simulation using a simplified model; whether they play a role in the behavior of real cardiac tissue is unknown. Hopefully, my results will encourage more accurate simulations and, even more importantly, additional experimental measurements of the spatial-temporal distribution of transmembrane potential around the stimulating electrode during premature stimulation of cardiac tissue.

Even though this manuscript was flawed, it foreshadowed much of my research program for the mid-1990s; it was all there, in the rough. Moreover, in this case the reviewers were right and I was wrong. At the time, I was angry that anyone would reject my paper. Now, in retrospect, I realize they did me a favor; I benefited from their advice. For any young scientist who might be reading this post, don't be too discouraged by critical reviews and rejection. Give yourself a day to whine and fuss, then fix the problems that need fixing and move on. That's the way peer review works.